Generalized linear model

In statistics, the generalized linear model (GLM) is a flexible generalization of ordinary linear regression. The GLM generalizes linear regression by allowing the linear model to be related to the response variable via a link function and by allowing the magnitude of the variance of each measurement to be a function of its predicted value.

Generalized linear models were formulated by John Nelder and Robert Wedderburn as a way of unifying various other statistical models, including linear regression, logistic regression and Poisson regression.[1] They proposed an iteratively reweighted least squares method for maximum likelihood estimation of the model parameters. Maximum-likelihood estimation remains popular and is the default method on many statistical computing packages. Other approaches, including Bayesian approaches and least squares fits to variance stabilized responses, have been developed.


Overview

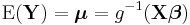

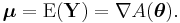

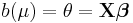

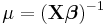

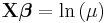

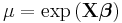

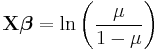

In a GLM, each outcome of the dependent variables, Y, is assumed to be generated from a particular distribution in the exponential family, a large range of probability distributions that includes the normal, binomial and Poisson distributions, among others. The mean, μ, of the distribution depends on the independent variables, X, through:

$$\operatorname{E}(Y) = \mu = g^{-1}(X\beta)$$

where E(Y) is the expected value of Y; Xβ is the linear predictor, a linear combination of unknown parameters, β; g is the link function.

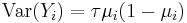

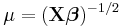

In this framework, the variance is typically a function, V, of the mean:

It is convenient if V follows from the exponential family distribution, but it may simply be that the variance is a function of the predicted value.

The unknown parameters, β, are typically estimated with maximum likelihood, maximum quasi-likelihood, or Bayesian techniques.

Model components

The GLM consists of three elements:

1. A probability distribution from the exponential family.

2. A linear predictor η = Xβ.

3. A link function g such that E(Y) = μ = g⁻¹(η).

Probability distribution

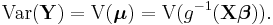

The overdispersed exponential family of distributions is a generalization of the exponential family and exponential dispersion model of distributions and includes those probability distributions, parameterized by  and

and  , whose density functions f (or probability mass function, for the case of a discrete distribution) can be expressed in the form

, whose density functions f (or probability mass function, for the case of a discrete distribution) can be expressed in the form

, called the dispersion parameter, typically is known and is usually related to the variance of the distribution. The functions

, called the dispersion parameter, typically is known and is usually related to the variance of the distribution. The functions  ,

,  ,

,  ,

,  , and

, and  are known. Many, although not all, common distributions are in this family.

are known. Many, although not all, common distributions are in this family.

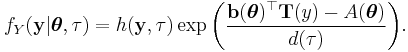

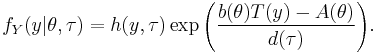

For scalar  and

and  , this reduces to

, this reduces to

is related to the mean of the distribution. If

is related to the mean of the distribution. If  is the identity function, then the distribution is said to be in canonical form (or natural form). Note that any distribution can be converted to canonical form by rewriting

is the identity function, then the distribution is said to be in canonical form (or natural form). Note that any distribution can be converted to canonical form by rewriting  as

as  and then applying the transformation

and then applying the transformation  . It is always possible to convert

. It is always possible to convert  in terms of the new parametrization, even if

in terms of the new parametrization, even if  is not a one-to-one function; see comments in the page on the exponential family. If, in addition,

is not a one-to-one function; see comments in the page on the exponential family. If, in addition,  is the identity and

is the identity and  is known, then

is known, then  is called the canonical parameter (or natural parameter) and is related to the mean through

is called the canonical parameter (or natural parameter) and is related to the mean through

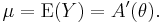

For scalar  and

and  , this reduces to

, this reduces to

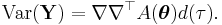

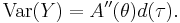

Under this scenario, the variance of the distribution can be shown to be[2]

For scalar  and

and  , this reduces to

, this reduces to

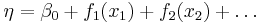
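As a worked check of the mean and variance identities, consider the Poisson distribution with mean μ. This illustration is added here and is not taken from the cited source. It assumes the scalar density is written as h(y, τ) exp((b(θ) T(y) − A(θ)) / d(τ)), with b the identity:

$$
\begin{aligned}
f(y \mid \mu) &= \frac{\mu^{y} e^{-\mu}}{y!} = \frac{1}{y!}\exp\bigl(y\ln\mu - \mu\bigr),\\
\theta &= \ln\mu,\quad T(y) = y,\quad A(\theta) = e^{\theta},\quad h(y,\tau) = \frac{1}{y!},\quad d(\tau) = 1,\\
\operatorname{E}(Y) &= A'(\theta) = e^{\theta} = \mu,\\
\operatorname{Var}(Y) &= A''(\theta)\,d(\tau) = e^{\theta} = \mu.
\end{aligned}
$$

Both results agree with the familiar fact that a Poisson variable has mean and variance equal to μ.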

Linear predictor

The linear predictor is the quantity which incorporates the information about the independent variables into the model. The symbol η (Greek "eta") is typically used to denote a linear predictor. It is related to the expected value of the data (thus, "predictor") through the link function.

η is expressed as linear combinations (thus, "linear") of unknown parameters β. The coefficients of the linear combination are represented as the matrix of independent variables X. η can thus be expressed as

$$\eta = X\beta$$

The elements of X are either measured by the experimenters or stipulated by them in the modeling design process.
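A minimal sketch of computing the linear predictor may make this concrete. The function name `linear_predictor`, the design matrix and the coefficient values are all hypothetical, chosen only for illustration:

```python
def linear_predictor(X, beta):
    """Return eta = X beta, with X given as a list of rows."""
    return [sum(x_ij * b_j for x_ij, b_j in zip(row, beta)) for row in X]


# Hypothetical design matrix: an intercept column plus one covariate.
X = [
    [1.0, 0.0],
    [1.0, 1.0],
    [1.0, 2.0],
]
beta = [0.5, 2.0]  # illustrative coefficients, not estimates from real data

eta = linear_predictor(X, beta)
print(eta)  # [0.5, 2.5, 4.5]
```

Each entry of `eta` is one observation's linear combination of the parameters; the link function then relates it to that observation's mean.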

Link function

The link function provides the relationship between the linear predictor and the mean of the distribution function. There are many commonly used link functions, and their choice can be somewhat arbitrary. It can be convenient to match the domain of the link function to the range of the distribution function's mean.

When using a distribution function with a canonical parameter θ, the canonical link function is the function that expresses θ in terms of μ, i.e. θ = b(μ). For the most common distributions, the mean μ is one of the parameters in the standard form of the distribution's density function, and then b(μ) is the function as defined above that maps the density function into its canonical form. When using the canonical link function, b(μ) = θ = Xβ, which allows XᵀY to be a sufficient statistic for β.

Following is a table of canonical link functions and their inverses (sometimes referred to as the mean function, as done here) used for several distributions in the exponential family.

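| Distribution | Name | Link Function | Mean Function |
|---|---|---|---|
| Normal | Identity | Xβ = μ | μ = Xβ |
| Exponential | Inverse | Xβ = μ⁻¹ | μ = (Xβ)⁻¹ |
| Gamma | Inverse | Xβ = μ⁻¹ | μ = (Xβ)⁻¹ |
| Inverse Gaussian | Inverse squared | Xβ = μ⁻² | μ = (Xβ)^(−1/2) |
| Poisson | Log | Xβ = ln(μ) | μ = exp(Xβ) |
| Binomial | Logit | Xβ = ln(μ / (1 − μ)) | μ = exp(Xβ) / (1 + exp(Xβ)) = 1 / (1 + exp(−Xβ)) |
| Multinomial | Logit | Xβ = ln(μ / (1 − μ)) | μ = exp(Xβ) / (1 + exp(Xβ)) = 1 / (1 + exp(−Xβ)) |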

In the cases of the exponential and gamma distributions, the domain of the canonical link function is not the same as the permitted range of the mean. In particular, the linear predictor may be negative, which would give an impossible negative mean. When maximizing the likelihood, precautions must be taken to avoid this. An alternative is to use a noncanonical link function.
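The canonical links named in the table, and the range problem just described, can be sketched in a few lines. The dictionary `LINKS` and the helper `round_trip_error` are hypothetical names used only for this illustration:

```python
import math

# Each entry pairs a link g(mu) with its inverse, the mean function g^{-1}(eta).
LINKS = {
    "identity": (lambda mu: mu, lambda eta: eta),
    "inverse": (lambda mu: 1.0 / mu, lambda eta: 1.0 / eta),
    "inverse_squared": (lambda mu: mu ** -2, lambda eta: eta ** -0.5),
    "log": (math.log, math.exp),
    "logit": (
        lambda mu: math.log(mu / (1.0 - mu)),
        lambda eta: 1.0 / (1.0 + math.exp(-eta)),
    ),
}


def round_trip_error(name, mu):
    """Return |g^{-1}(g(mu)) - mu|, which should be near zero for a valid pair."""
    link, mean = LINKS[name]
    return abs(mean(link(mu)) - mu)


# Every mean function undoes its link (checked at an arbitrary mu in (0, 1)).
for name in LINKS:
    assert round_trip_error(name, 0.3) < 1e-12

# With the inverse link, a negative linear predictor maps to a negative mean,
# which is impossible for an exponential or gamma response.
_, inverse_mean = LINKS["inverse"]
print(inverse_mean(-2.0))  # -0.5
```

The log mean function, by contrast, returns a positive value for every real η, which is one reason a noncanonical log link is sometimes preferred for gamma responses.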

Fitting

Maximum likelihood

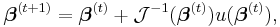

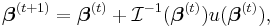

The maximum likelihood estimates can be found using an iteratively reweighted least squares algorithm using either a Newton–Raphson method with updates of the form:

where  is the observed information matrix (the negative of the Hessian matrix) and

is the observed information matrix (the negative of the Hessian matrix) and  is the score function; or a Fisher's scoring method:

is the score function; or a Fisher's scoring method:

where  is the Fisher information matrix. Note that if the canonical link function is used, then the two methods are the same.[3]

is the Fisher information matrix. Note that if the canonical link function is used, then the two methods are the same.[3]
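The scoring update can be sketched for one small case: a Poisson model with log link, an intercept and a single covariate. For this model the score is Xᵀ(y − μ) and the Fisher information is Xᵀ diag(μ) X, so the 2×2 system can be solved by hand. The function name, starting values, stopping rule and data below are assumptions made for illustration, not a reference implementation:

```python
import math


def fit_poisson_log_link(x, y, max_iter=50, tol=1e-10):
    """Fisher scoring for log(mu_i) = b0 + b1 * x_i with Poisson responses."""
    # Start from the intercept-only fit so exp() stays well behaved.
    b0, b1 = math.log(sum(y) / len(y)), 0.0
    for _ in range(max_iter):
        mu = [math.exp(b0 + b1 * xi) for xi in x]
        # Score vector u = X^T (y - mu).
        u0 = sum(yi - mi for yi, mi in zip(y, mu))
        u1 = sum(xi * (yi - mi) for xi, yi, mi in zip(x, y, mu))
        # Fisher information X^T diag(mu) X, a symmetric 2x2 matrix.
        i00 = sum(mu)
        i01 = sum(xi * mi for xi, mi in zip(x, mu))
        i11 = sum(xi * xi * mi for xi, mi in zip(x, mu))
        det = i00 * i11 - i01 * i01
        # Solve (information) * step = score using the 2x2 inverse.
        s0 = (i11 * u0 - i01 * u1) / det
        s1 = (i00 * u1 - i01 * u0) / det
        b0, b1 = b0 + s0, b1 + s1
        if abs(s0) + abs(s1) < tol:
            break
    return b0, b1


# Made-up counts, for illustration only.
x = [0.0, 1.0, 2.0, 3.0, 4.0]
y = [1, 3, 4, 9, 16]
b0, b1 = fit_poisson_log_link(x, y)
print(round(b0, 3), round(b1, 3))
```

Because the log link is canonical for the Poisson distribution, this Fisher scoring iteration is also the Newton–Raphson iteration. At convergence the score is numerically zero, which is a convenient check on the fit.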

Bayesian methods

In general, the posterior distribution cannot be found in closed form and so must be approximated, usually using Laplace approximations or some type of Markov chain Monte Carlo method such as Gibbs sampling.

Examples

General linear models

A possible point of confusion has to do with the distinction between generalized linear models and the general linear model, two broad statistical models. The general linear model may be viewed as a case of the generalized linear model with identity link. As most exact results of interest are obtained only for the general linear model, the general linear model has undergone a somewhat longer historical development. Results for the generalized linear model with non-identity link are asymptotic (tending to work well with large samples).

Linear regression

A simple, very important example of a generalized linear model (also an example of a general linear model) is linear regression. In linear regression, the use of the least-squares estimator is justified by the Gauss-Markov theorem, which does not assume that the distribution is normal.

From the perspective of generalized linear models, however, it is useful to suppose that the distribution function is the normal distribution with constant variance and the link function is the identity, which is the canonical link if the variance is known.

For the normal distribution, the generalized linear model has a closed form expression for the maximum-likelihood estimates, which is convenient. Most other GLMs lack closed form estimates.
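For the normal model with identity link, the maximum-likelihood estimate of β coincides with the least-squares estimate, β̂ = (XᵀX)⁻¹Xᵀy. With an intercept and a single covariate this reduces to the familiar slope and intercept formulas, sketched below. The function name `ols_simple` and the data are hypothetical:

```python
def ols_simple(x, y):
    """Closed-form least-squares fit of y = intercept + slope * x."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    slope = s_xy / s_xx
    intercept = y_bar - slope * x_bar
    return intercept, slope


# Illustrative data lying exactly on the line y = 1 + 2x.
x = [0.0, 1.0, 2.0, 3.0]
y = [1.0, 3.0, 5.0, 7.0]
print(ols_simple(x, y))  # (1.0, 2.0)
```

No iteration is needed here, in contrast to the scoring methods used for most other GLMs.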

Binomial data

When the response data, Y, are binary (taking on only values 0 and 1), the distribution function is generally chosen to be the binomial distribution and the interpretation of μi is then the probability, p, of Yi taking on the value one.

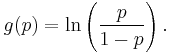

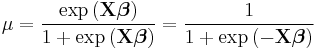

There are several popular link functions for binomial functions; the most typical is the canonical logit link:

GLMs with this setup are logistic regression models.

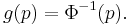

In addition, the inverse of any continuous cumulative distribution function (CDF) can be used for the link since the CDF's range is ![[0,1]](/2012-wikipedia_en_all_nopic_01_2012/I/ccfcd347d0bf65dc77afe01a3306a96b.png) , the range of the binomial mean. The normal CDF

, the range of the binomial mean. The normal CDF  is a popular choice and yields the probit model. Its link is

is a popular choice and yields the probit model. Its link is

The complementary log-log function log(−log(1−p)) may also be used. This link function is asymmetric and will often produce different results from the probit and logit link functions.
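The three links can be compared numerically with a short sketch. The function names are chosen here for illustration; `statistics.NormalDist` from the Python standard library (3.8 or later) supplies the inverse normal CDF:

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal: mean 0, standard deviation 1


def logit(p):
    return math.log(p / (1.0 - p))


def probit(p):
    return _STD_NORMAL.inv_cdf(p)


def cloglog(p):
    return math.log(-math.log(1.0 - p))


for p in (0.1, 0.5, 0.9):
    print(p, round(logit(p), 3), round(probit(p), 3), round(cloglog(p), 3))
```

The logit and probit values at p and 1 − p differ only in sign, whereas the complementary log-log values do not, which is the asymmetry mentioned above.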

The identity link is also sometimes used for binomial data to yield the linear probability model, but a drawback of this model is that the predicted probabilities can be greater than one or less than zero. In implementation it is possible to fix the nonsensical probabilities outside of [0,1], but interpreting the coefficients can be difficult. The model's primary merit is that near p = 0.5 it is approximately a linear transformation of the probit and logit; econometricians sometimes call this the Harvard model.

The variance function for binomial data is given by:

$$\operatorname{Var}(Y_i) = \tau \mu_i (1 - \mu_i)$$

where the dispersion parameter τ is typically fixed at exactly one. When it is not, the resulting quasi-likelihood model is often described as binomial with overdispersion or quasibinomial.

Count data

Another example of generalized linear models includes Poisson regression which models count data using the Poisson distribution. The link is typically the logarithm, the canonical link.

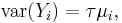

The variance function is proportional to the mean

where the dispersion parameter τ is typically fixed at exactly one. When it is not, the resulting quasi-likelihood model is often described as poisson with overdispersion or quasipoisson.
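When τ is not fixed at one, a common way to estimate it is the Pearson statistic divided by the residual degrees of freedom. The sketch below assumes the Poisson variance function V(μ) = μ; the function name, the fitted means and the counts are hypothetical:

```python
def pearson_dispersion(y, mu, n_params):
    """Pearson estimate of tau: sum((y - mu)^2 / V(mu)) / (n - p), with V(mu) = mu."""
    chi2 = sum((yi - mi) ** 2 / mi for yi, mi in zip(y, mu))
    return chi2 / (len(y) - n_params)


# Made-up counts with an intercept-only fit (the fitted mean is the sample mean).
y = [0, 4, 8]
mu = [4.0, 4.0, 4.0]
print(pearson_dispersion(y, mu, n_params=1))  # 4.0
```

An estimate well above one, as in this toy example, suggests more variability than the Poisson model allows.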

Extensions

The standard GLM assumes that the observations are uncorrelated. Extensions have been developed to allow for correlation between observations, as occurs for example in longitudinal studies and clustered designs:

- Generalized estimating equations (GEEs) allow for the correlation between observations without the use of an explicit probability model for the origin of the correlations, so there is no explicit likelihood. They are suitable when the random effects and their variances are not of inherent interest, as they allow for the correlation without explaining its origin. The focus is on estimating the average response over the population ("population-averaged" effects) rather than the regression parameters that would enable prediction of the effect of changing one or more components of X on a given individual. GEEs are usually used in conjunction with Huber-White standard errors.[4][5]

- Generalized linear mixed models (GLMMs) are an extension to GLMs that includes random effects in the linear predictor, giving an explicit probability model that explains the origin of the correlations. The resulting "subject-specific" parameter estimates are suitable when the focus is on estimating the effect of changing one or more components of X on a given individual. GLMMs are a particular type of multilevel model (mixed model). In general, fitting GLMMs is more computationally complex and intensive than fitting GEEs.

- Hierarchical generalized linear models (HGLMs) are similar to GLMMs apart from two distinctions:

  - The random effects can have any distribution in the exponential family, whereas current GLMMs nearly always have normal random effects;

  - They are not as computationally intensive, as instead of integrating out the random effects they are based on a modified form of likelihood known as the hierarchical likelihood or h-likelihood.

  The theoretical basis and accuracy of the methods used in HGLMs have been the subject of some debate in the statistical literature.[6]

Generalized additive models

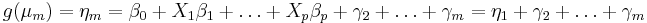

Generalized additive models (GAMs) are another extension to GLMs in which the linear predictor η is not restricted to be linear in the covariates X but is the sum of smoothing functions applied to the xis:

The smoothing functions fi are estimated from the data. In general this requires a large number of data points and is computationally intensive.[7][8]

Multinomial regression

The binomial case may be easily extended to allow for a multinomial distribution as the response (also, a Generalized Linear Model for counts, with a constrained total). There are two ways in which this is usually done:

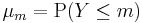

Ordered response

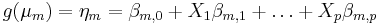

If the response variable is an ordinal measurement, then one may fit a model function of the form:

where

where  .

.

for m > 2. Different links g lead to proportional odds models or ordered probit models.

Unordered response

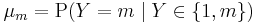

If the response variable is a nominal measurement, or the data do not satisfy the assumptions of an ordered model, one may fit a model of the following form:

where

where  .

.

for m > 2. Different links g lead to multinomial logit or multinomial probit models. These are less efficient than the ordered response models, as more parameters are estimated.
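For the multinomial logit case, the category probabilities can be recovered from the linear predictors, as in the sketch below. It assumes that category 1 is the baseline with linear predictor 0 and that g is the logit, so that each ηₘ is the log-odds of category m against category 1. The function name and the input values are hypothetical:

```python
import math


def baseline_category_probs(etas):
    """Probabilities for categories 1..M given eta_2..eta_M (eta_1 = 0 as baseline)."""
    weights = [1.0] + [math.exp(eta) for eta in etas]
    total = sum(weights)
    return [w / total for w in weights]


# Illustrative linear predictors for categories 2 and 3.
probs = baseline_category_probs([1.0, -0.5])
print([round(p, 3) for p in probs])
print(round(math.log(probs[1] / probs[0]), 6))  # log-odds of category 2 vs 1
```

The probabilities sum to one by construction, and each log-odds against the baseline returns the corresponding linear predictor.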

Confusion with general linear models

The term "generalized linear model", and especially its abbreviation GLM, can be confused with general linear model. John Nelder has expressed regret about this in a conversation with Stephen Senn:

Senn: I must confess to having some confusion when I was a young statistician between general linear models and generalized linear models. Do you regret the terminology?

Nelder: I think probably I do. I suspect we should have found some more fancy name for it that would have stuck and not been confused with the general linear model, although general and generalized are not quite the same. I can see why it might have been better to have thought of something else.[9]

See also

- Comparison of general and generalized linear models

- Generalized linear array model

- Tweedie distributions

- GLIM (software)

Notes

1. Nelder, John; Wedderburn, Robert (1972). "Generalized Linear Models". Journal of the Royal Statistical Society. Series A (General) (Blackwell Publishing) 135 (3): 370–384. doi:10.2307/2344614. JSTOR 2344614.

2. McCullagh and Nelder (1989), Chapter 2.

3. McCullagh and Nelder (1989), Page 43.

4. Zeger, Scott L.; Liang, Kung-Yee; Albert, Paul S. (1988). "Models for Longitudinal Data: A Generalized Estimating Equation Approach". Biometrics (International Biometric Society) 44 (4): 1049–1060. doi:10.2307/2531734. JSTOR 2531734. PMID 3233245.

5. Hardin, James; Hilbe, Joseph (2003). Generalized Estimating Equations. London: Chapman and Hall/CRC. ISBN 1584883073.

6. Lee, Youngjo; Nelder, John; Pawitan, Yudi (2006). Generalized Linear Models with Random Effects: Unified Analysis via H-likelihood. Chapman & Hall/CRC. ISBN 1584886315.

7. Hastie, T. J.; Tibshirani, R. J. (1990). Generalized Additive Models. Chapman & Hall/CRC. ISBN 9780412343902.

8. Wood, Simon (2006). Generalized Additive Models: An Introduction with R. Chapman & Hall/CRC. ISBN 1-584-88474-6.

9. Senn, Stephen (2003). "A conversation with John Nelder". Statistical Science 18 (1): 118–131. doi:10.1214/ss/1056397489. http://projecteuclid.org/euclid.ss/1056397489.

References

- McCullagh, Peter; Nelder, John (1989). Generalized Linear Models, Second Edition. Boca Raton: Chapman and Hall/CRC. ISBN 0-412-31760-5.

Further reading

- Dobson, A.J.; Barnett, A.G. (2008). Introduction to Generalized Linear Models (3rd ed.). Boca Raton, FL: Chapman and Hall/CRC. ISBN 1584881658.

- Hardin, James; Hilbe, Joseph (2007). Generalized Linear Models and Extensions (2nd ed.). College Station: Stata Press. ISBN 1597180149.

External links

- Systems Analysis, Modelling and Prediction (SAMP), University of Oxford

- Open-source MATLAB code for GLM fitting.

- John Nelder FRS

- Royal Society citation for Nelder
